See it in action.

Jinja2 templates + Tailwind, with async polling and SSE streaming baked into the UI for that "AI thinking live" feel.

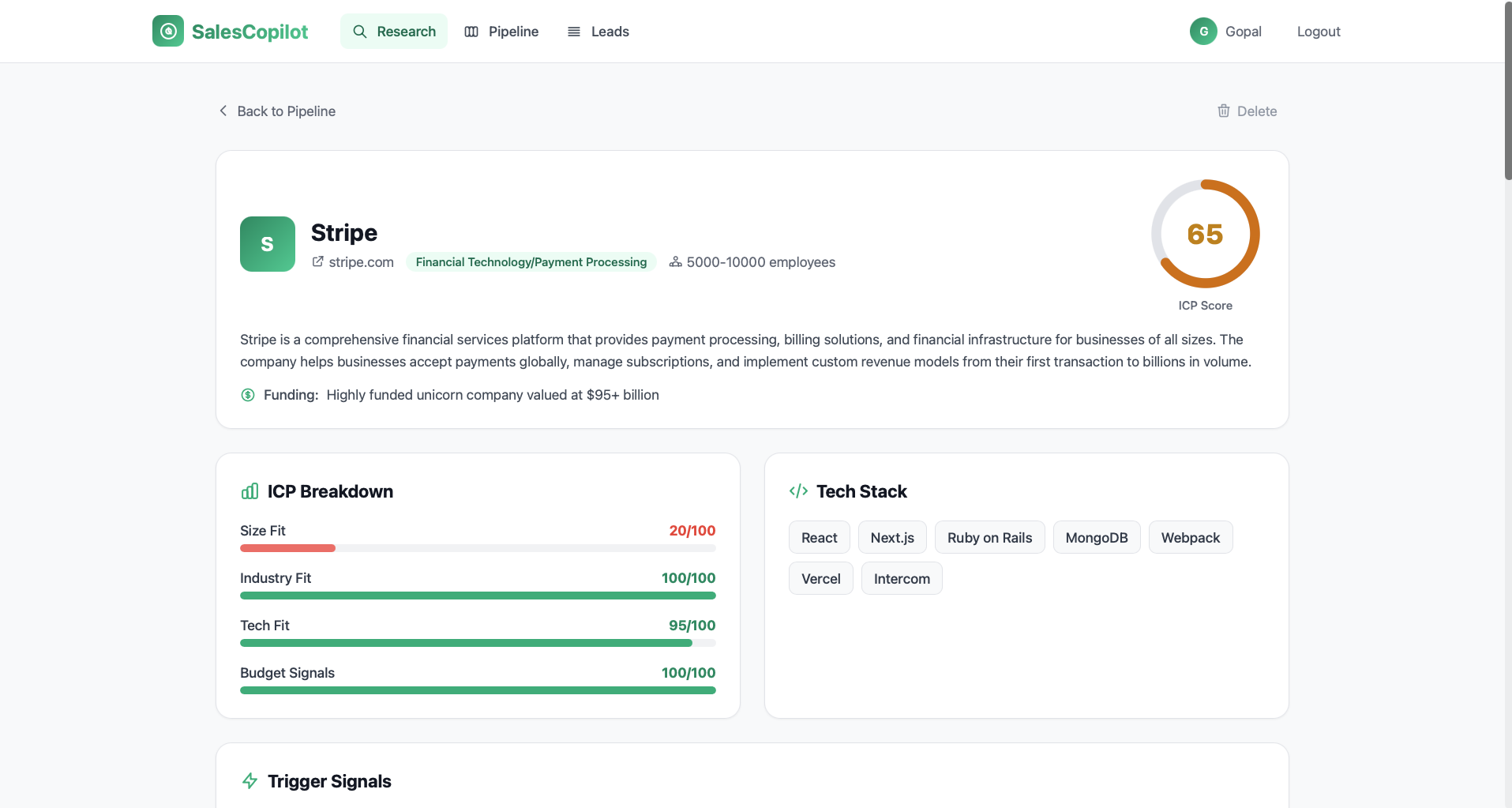

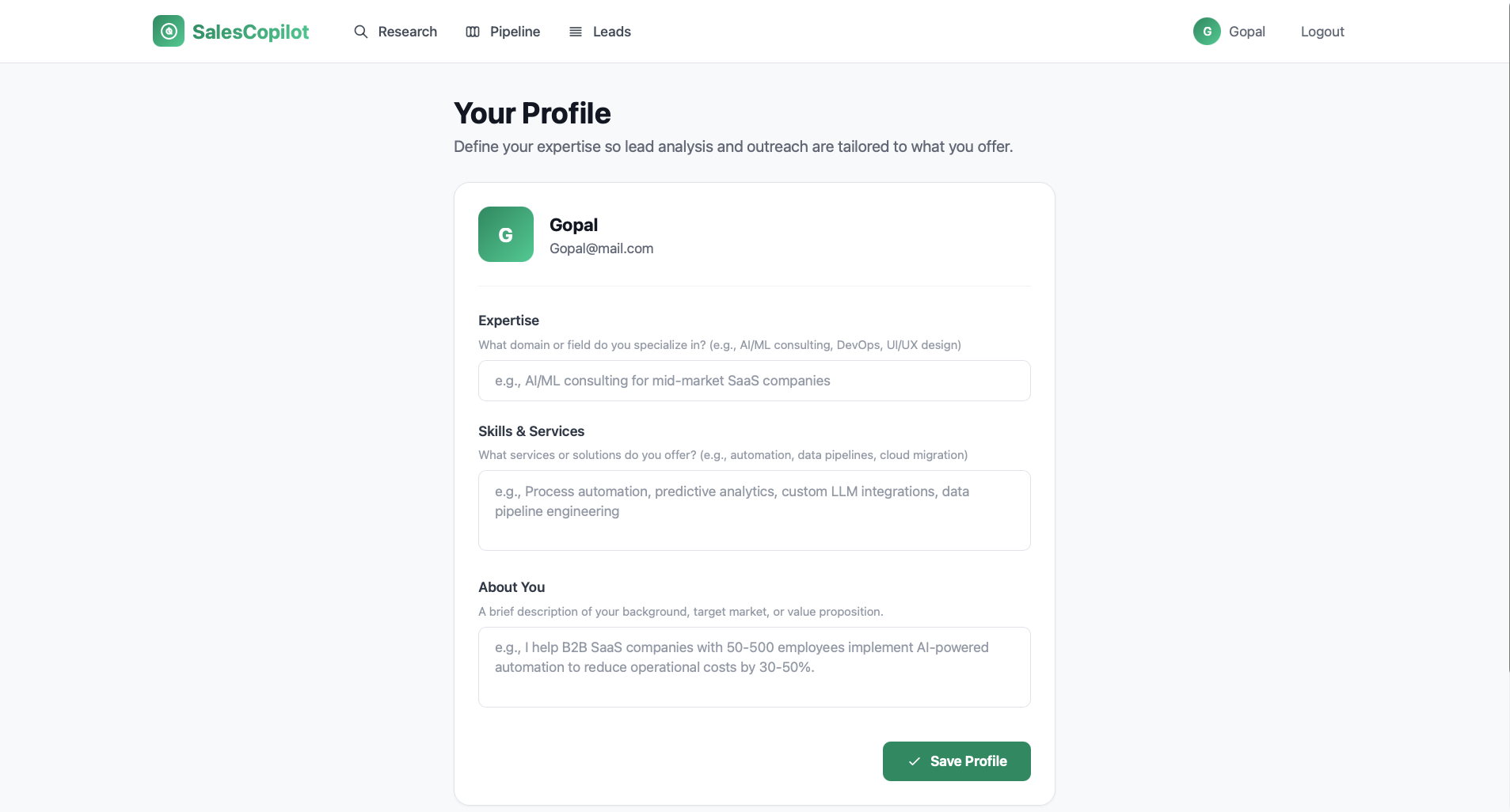

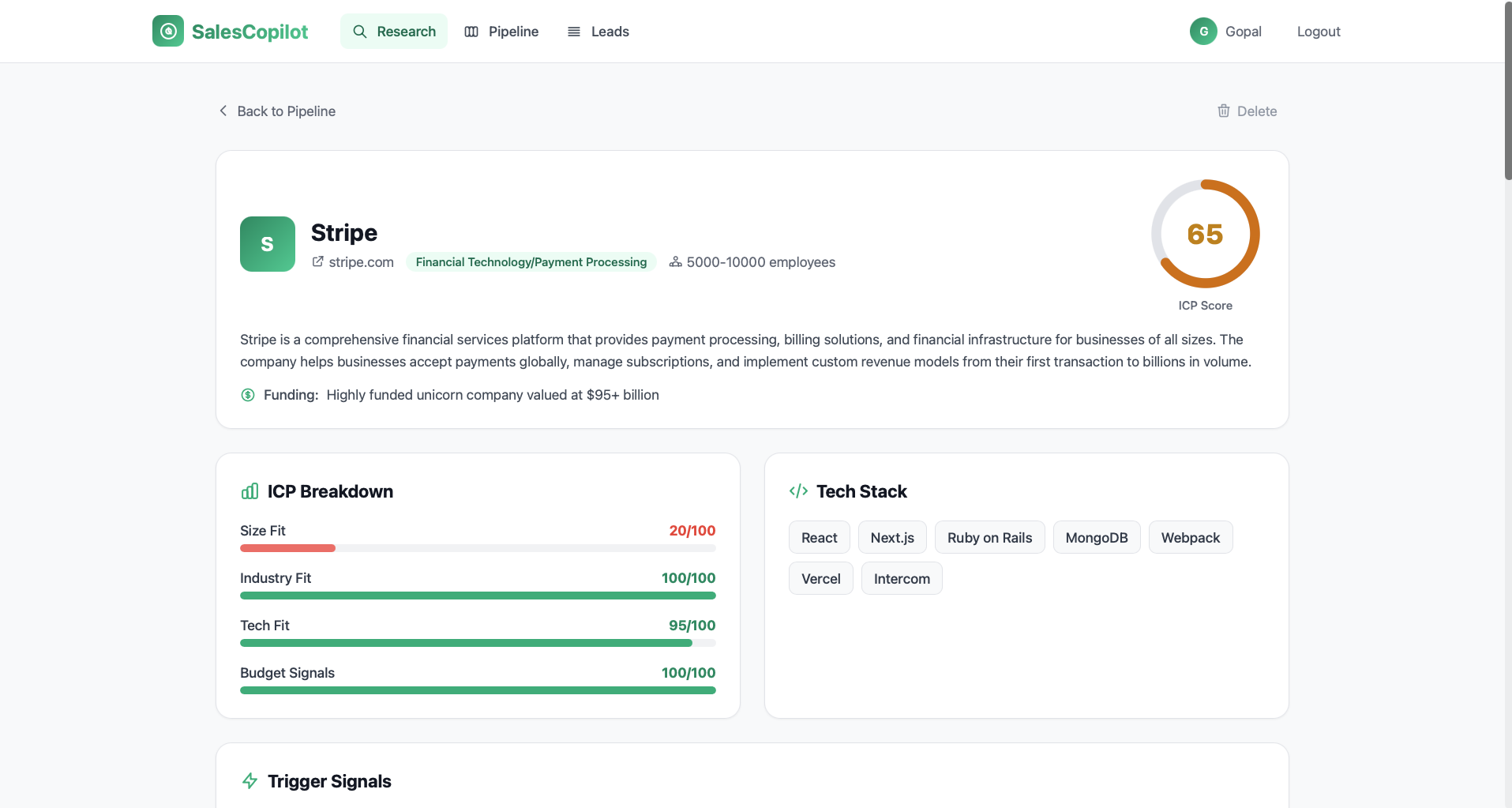

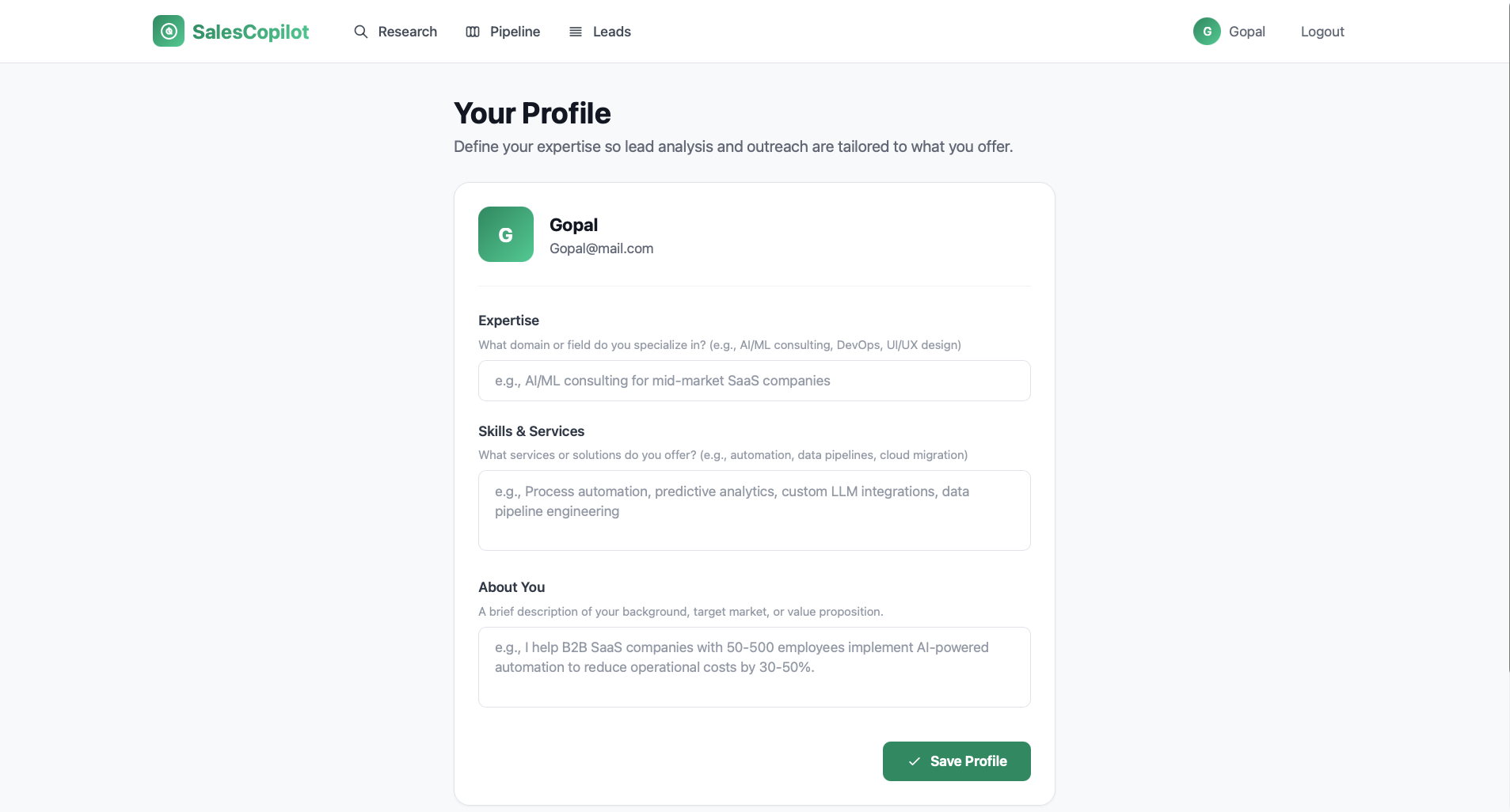

A Claude-powered sales copilot that turns a company link into an instant ICP score, decision-makers, buying signals, and a personalized outreach email, all streamed into your dashboard in under 10 seconds.

Every single prospect starts with the same grind: open a tab, scroll a website, google the funding round, hunt for a decision-maker, hand-write a cold email. Multiply by 15 a day.

Website, LinkedIn, funding search, headcount check, pick 2-3 contacts, draft an email. Every single time.

Ten open tabs per company, nothing written down, state lost when you switch to the next one.

Time pressure kills personalization. Reps fall back on templates that ignore real signals like hiring or funding.

When the meeting gets booked, the rep is back in the tabs — rebuilding context they never captured.

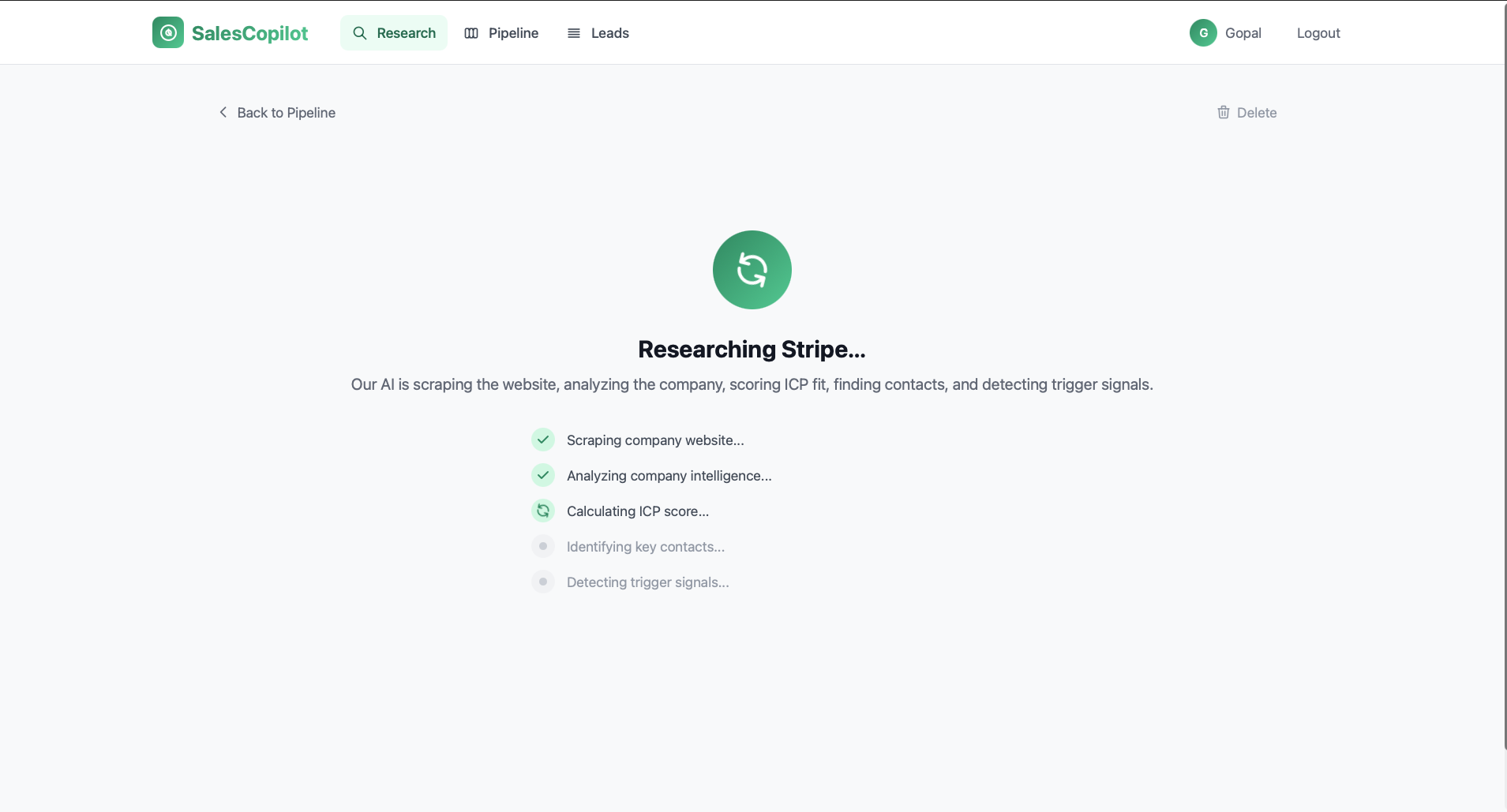

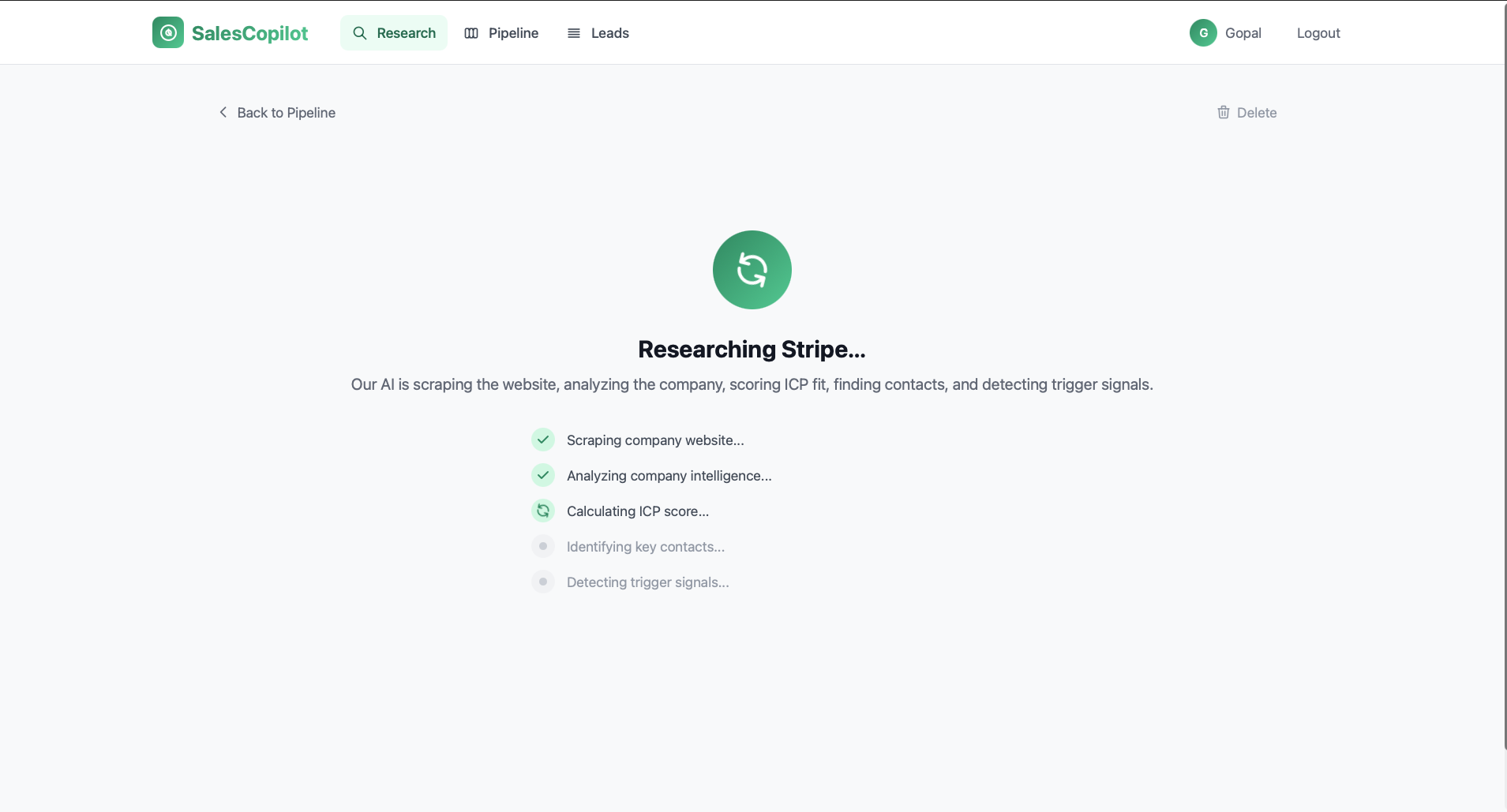

A single paste kicks off an async pipeline that scrapes, analyzes, scores, and drafts — rendering into the dashboard as Claude finishes each step.

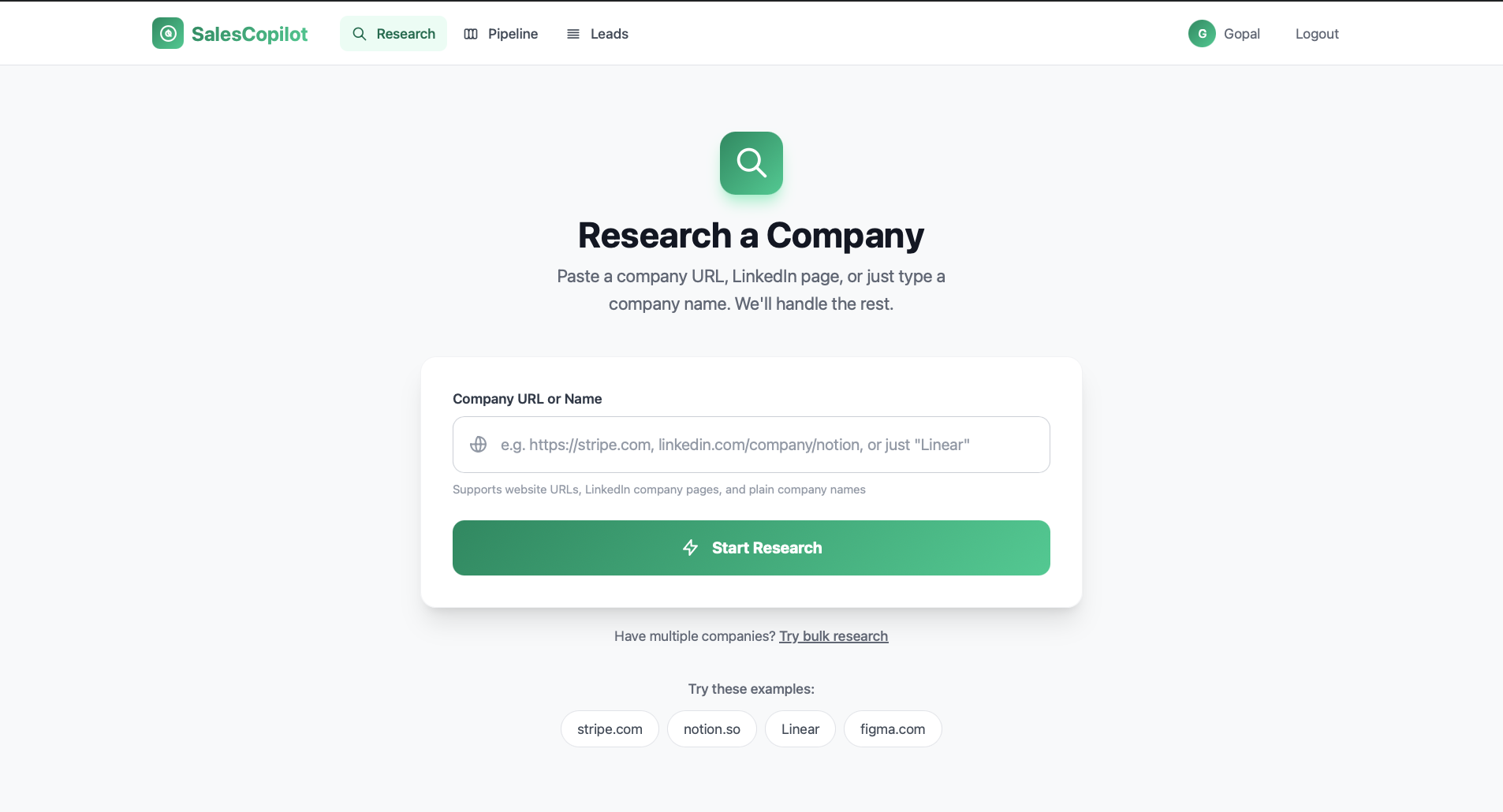

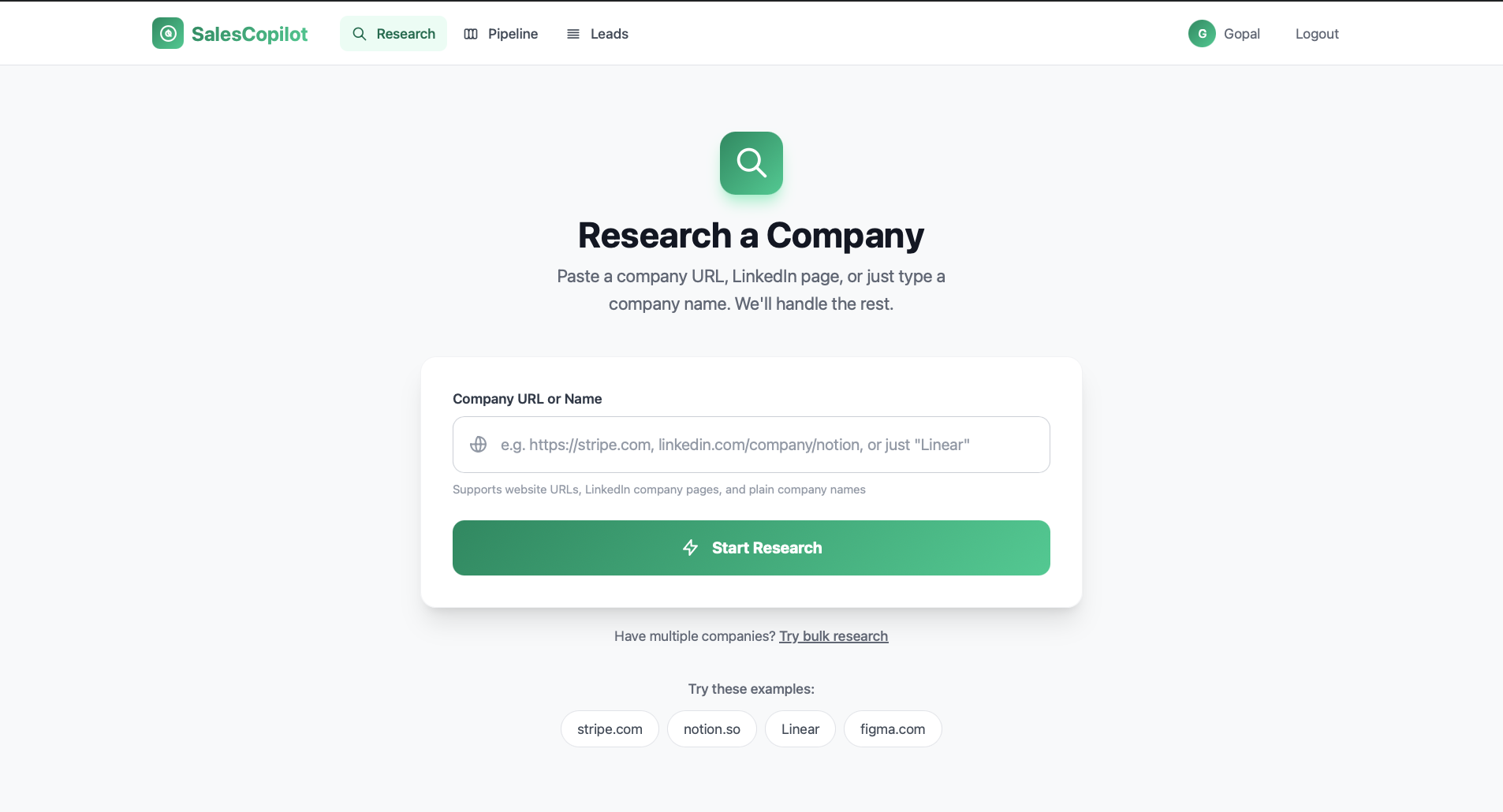

Website, LinkedIn, or a plain name. Auto-detected and routed to the right pipeline.

BeautifulSoup extracts the raw page content; Claude Sonnet 4 turns it into structured company intelligence.

Three Claude calls fan out in parallel: fit score (0–100), 3–5 decision-makers, 3–5 buying signals.

Pick a tone. Claude streams a subject + body via SSE, or a full markdown meeting brief — live in the UI.
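One detail matters when an email body is streamed over SSE: a text chunk can contain newlines, and the SSE wire format expects each line of a payload in its own `data:` field, with a blank line ending the event. Below is a minimal sketch of a framing helper under that assumption. `sse_event` is a hypothetical name for illustration, not a function from the project.

```python
from __future__ import annotations


def sse_event(data: str, event: str | None = None) -> str:
    """Frame a payload as one Server-Sent Event (hypothetical helper).

    Each line of the payload gets its own "data:" field so embedded
    newlines survive; a blank line terminates the event.
    """
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # Normalize line endings, then keep empty segments so blank lines
    # in the email body are preserved as empty "data:" fields.
    normalized = data.replace("\r\n", "\n").replace("\r", "\n")
    for part in normalized.split("\n"):
        lines.append(f"data: {part}")
    return "\n".join(lines) + "\n\n"
```

On the browser side, `EventSource` joins consecutive `data:` lines with a newline, so a chunk such as `"Hi Ana,\n\nQuick note"` arrives intact as a single message.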

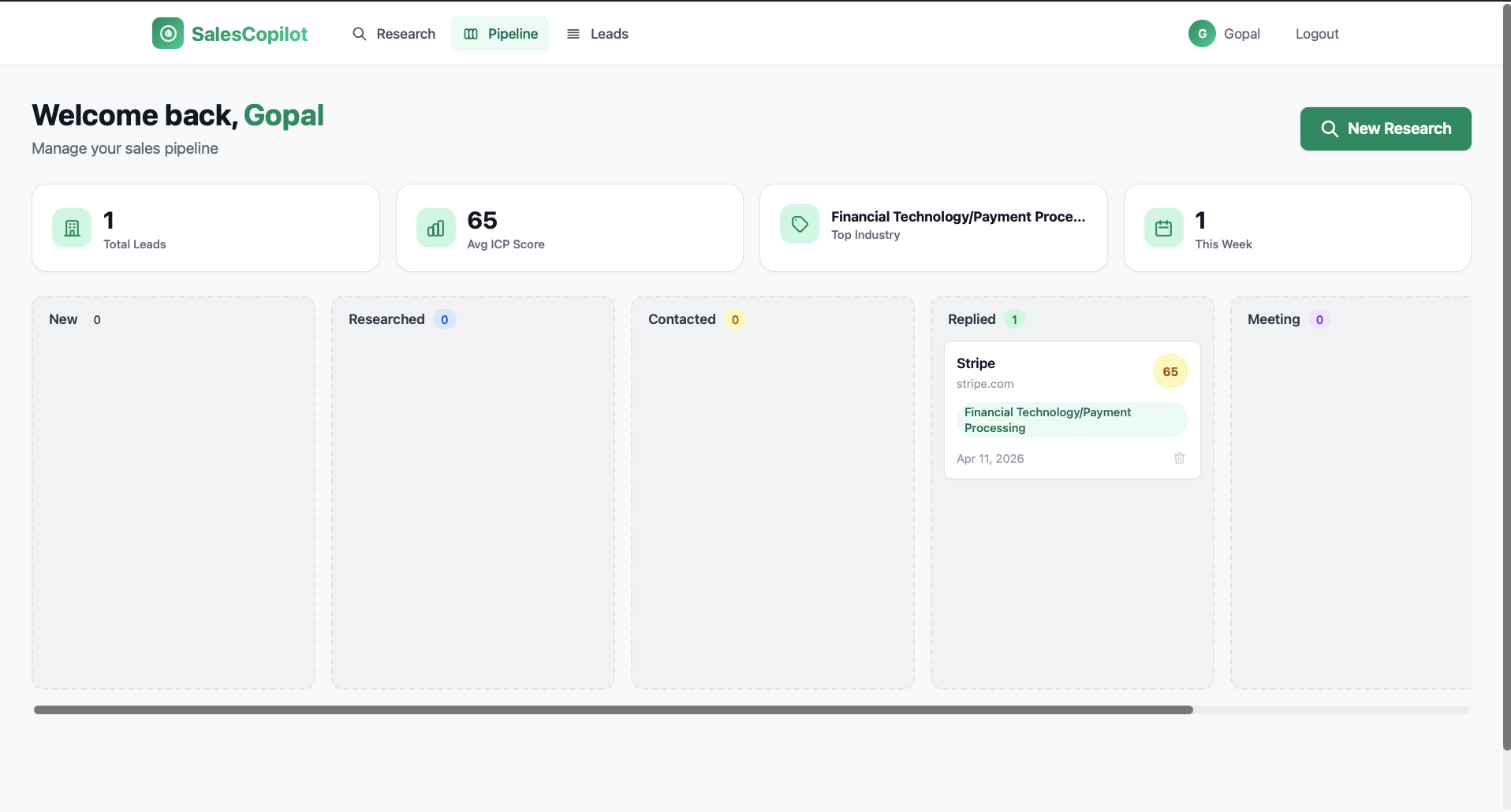

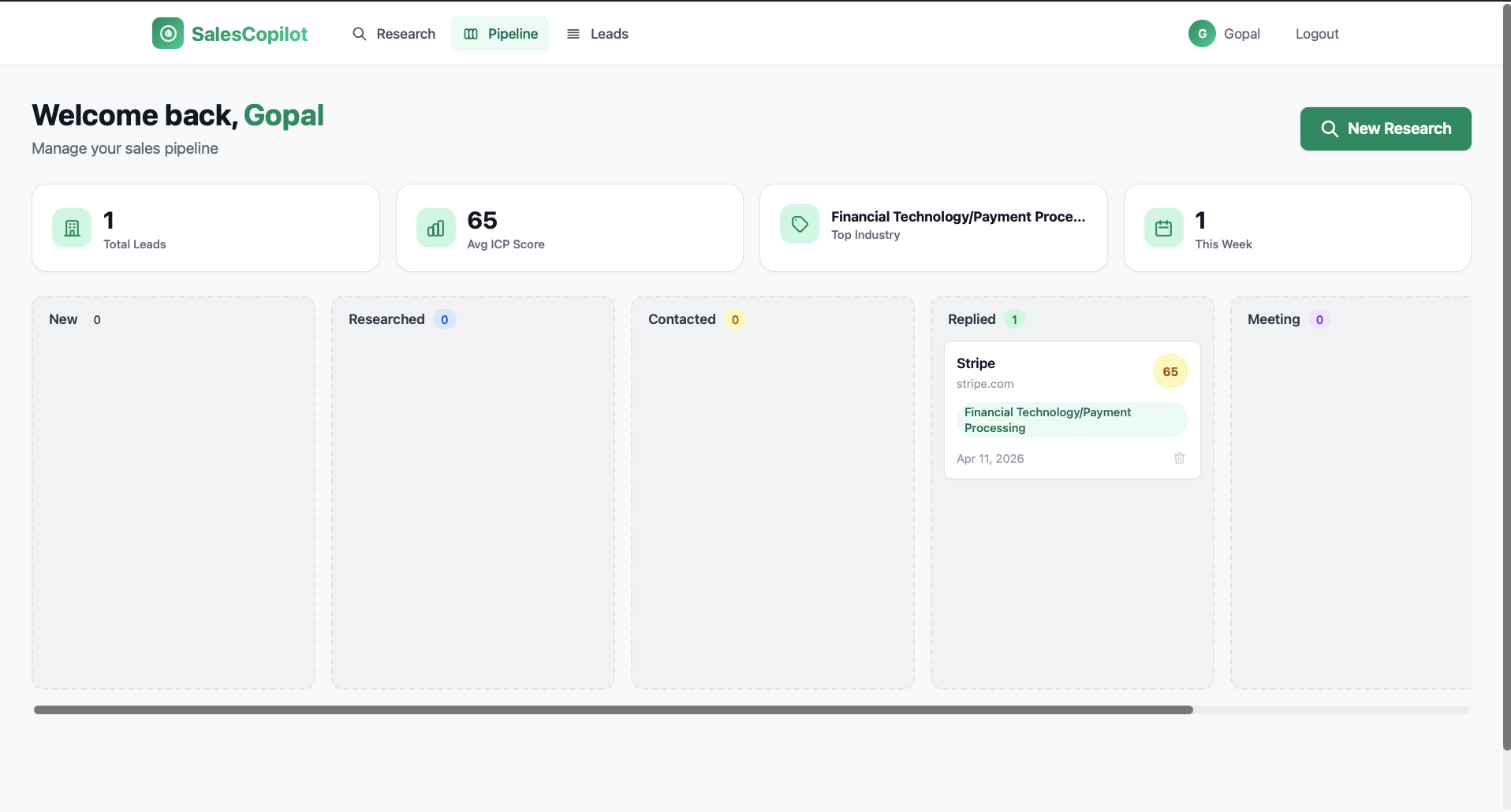

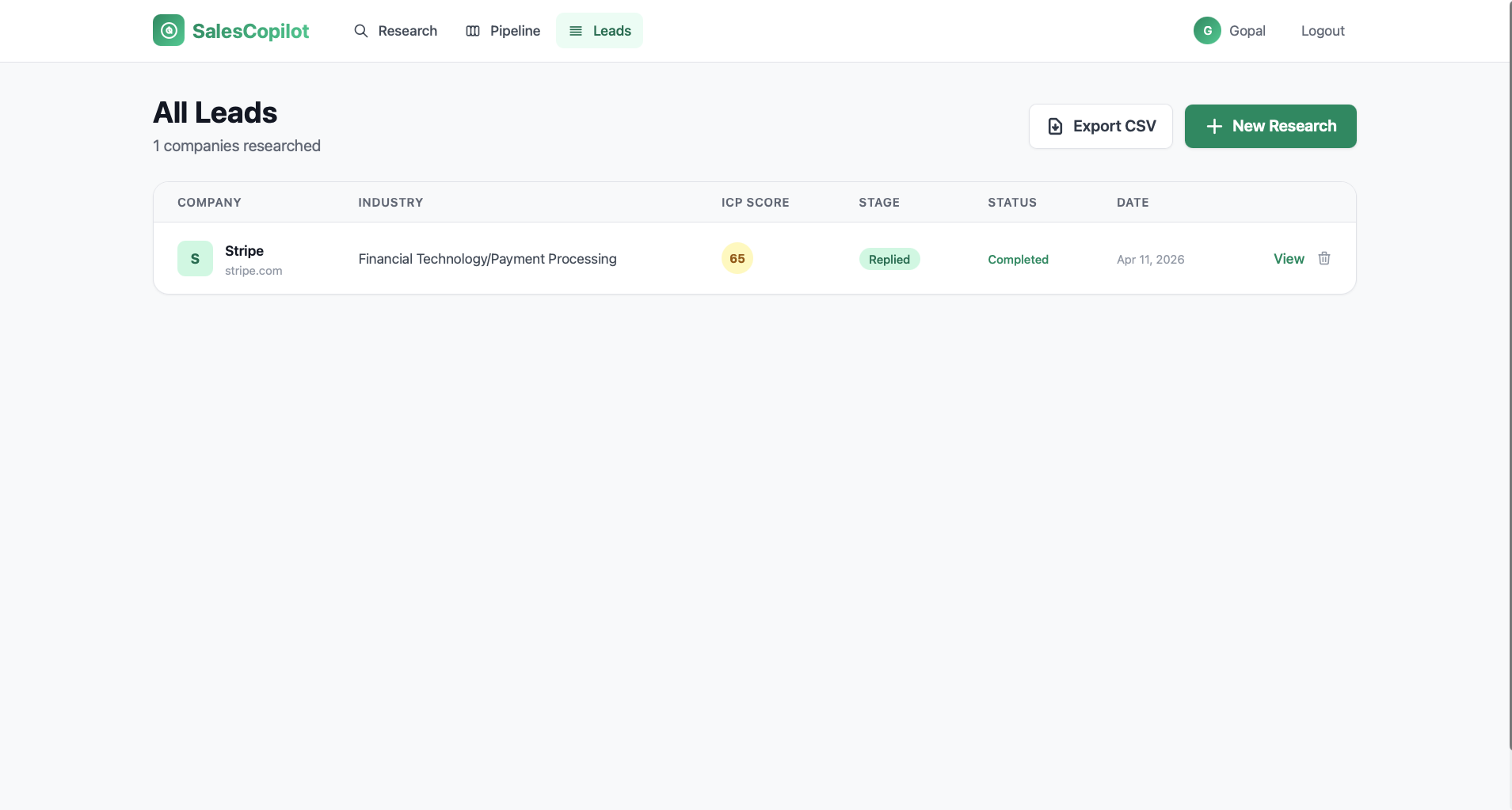

Drag from new → researched → contacted → replied → meeting → closed. Timestamps auto-logged.
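The stage tracking described above can be pictured as a small state update: validate the target stage, set it, and record when it happened. The sketch below is an assumption about the shape of that logic. `LeadCard`, `stage_timestamps`, and `move_to_stage` are hypothetical names, and it allows moves in any direction because the source does not say whether backward drags are restricted.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone

# Stage names taken from the pipeline described in the text.
STAGES = ("new", "researched", "contacted", "replied", "meeting", "closed")


@dataclass
class LeadCard:
    """Hypothetical in-memory stand-in for a lead row."""
    name: str
    stage: str = "new"
    stage_timestamps: dict[str, datetime] = field(default_factory=dict)


def move_to_stage(card: LeadCard, stage: str, now: datetime | None = None) -> LeadCard:
    """Move a card to a stage and log the time of the move."""
    if stage not in STAGES:
        raise ValueError(f"unknown stage: {stage!r}")
    card.stage = stage
    # Store timezone-aware UTC timestamps; `now` is injectable for testing.
    card.stage_timestamps[stage] = now or datetime.now(timezone.utc)
    return card
```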

```python
# Async enrichment: runs in a background task
# (get_lead, scraper_service, ai_service, db, profile, and the ORM models
# are defined elsewhere in the app)
import asyncio
from uuid import UUID


async def enrich_lead(lead_id: UUID):
    lead = await get_lead(lead_id)

    # 1. Scrape: httpx + BeautifulSoup
    scraped = await scraper_service.scrape_company(lead.url)

    # 2. Company analysis: sequential, blocks the rest
    company = await ai_service.analyze_company(scraped)

    # 3. Score + contacts + triggers in parallel
    icp, contacts, triggers = await asyncio.gather(
        ai_service.score_icp(company, user_profile=profile),
        ai_service.identify_contacts(company, scraped),
        ai_service.detect_triggers(company, scraped),
    )

    # 4. Persist everything
    await db.add_all([
        ICPScore(lead_id=lead.id, **icp),
        *[Contact(lead_id=lead.id, **c) for c in contacts],
        *[TriggerSignal(lead_id=lead.id, **t) for t in triggers],
    ])
    lead.enrichment_status = "completed"
    lead.pipeline_stage = "researched"
    await db.commit()
```

Clean MVC with FastAPI, async all the way down: background tasks for enrichment, Server-Sent Events for generation, polling for status.
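To make the "background task plus status polling" pattern concrete, here is a minimal, framework-free sketch using only `asyncio`. Everything in it is a stand-in: the in-memory `STATUS` dict replaces the database, `fake_enrich` replaces the real pipeline, and the `"pending"` and `"processing"` status values are assumptions (only `"completed"` appears in the project code).

```python
import asyncio
import uuid

# In-memory stand-ins for the database; hypothetical, for illustration only.
STATUS: dict[str, str] = {}
_background_tasks: set[asyncio.Task] = set()


async def fake_enrich(lead_id: str) -> None:
    """Placeholder for the real scrape + Claude pipeline."""
    STATUS[lead_id] = "processing"
    await asyncio.sleep(0.05)
    STATUS[lead_id] = "completed"


async def create_lead(url: str) -> str:
    """Create a lead and return immediately while enrichment runs."""
    lead_id = str(uuid.uuid4())
    STATUS[lead_id] = "pending"
    task = asyncio.create_task(fake_enrich(lead_id))
    # Keep a strong reference so the task is not garbage-collected mid-flight.
    _background_tasks.add(task)
    task.add_done_callback(_background_tasks.discard)
    return lead_id


def get_status(lead_id: str) -> str:
    """What a status endpoint would return for one lead."""
    return STATUS.get(lead_id, "unknown")


async def demo() -> list[str]:
    """Poll like the UI would, collecting each distinct status seen."""
    lead_id = await create_lead("https://example.com")
    seen = [get_status(lead_id)]
    while seen[-1] != "completed":
        await asyncio.sleep(0.01)
        status = get_status(lead_id)
        if status != seen[-1]:
            seen.append(status)
    return seen
```

Running `asyncio.run(demo())` shows the key property: `create_lead` returns before enrichment has started, and the caller learns about progress only by asking again.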

The BeautifulSoup scraper feeds Claude, which extracts a structured company profile: domain, industry, size, funding, logo.

0–100 overall plus size · industry · tech · budget dimensions. Adapts to your expertise and target profile.

Claude surfaces 3–5 contacts with name, title, LinkedIn search URL, and a plausible email pattern if none were scraped.

Hiring, funding, expansion, product launch, leadership change — each flagged with high / medium / low severity.

Pick a tone, watch Claude write a subject + body into the editor in real time via Server-Sent Events.

References the previous email, adds new value, never repeats the pitch. Same tone control as outreach.

Markdown brief: company summary, talking points, discovery questions, objection handlers. Streamed live into the UI.

Generates a <300-char connection note and a longer follow-up InMail, both referencing specific triggers and signals.

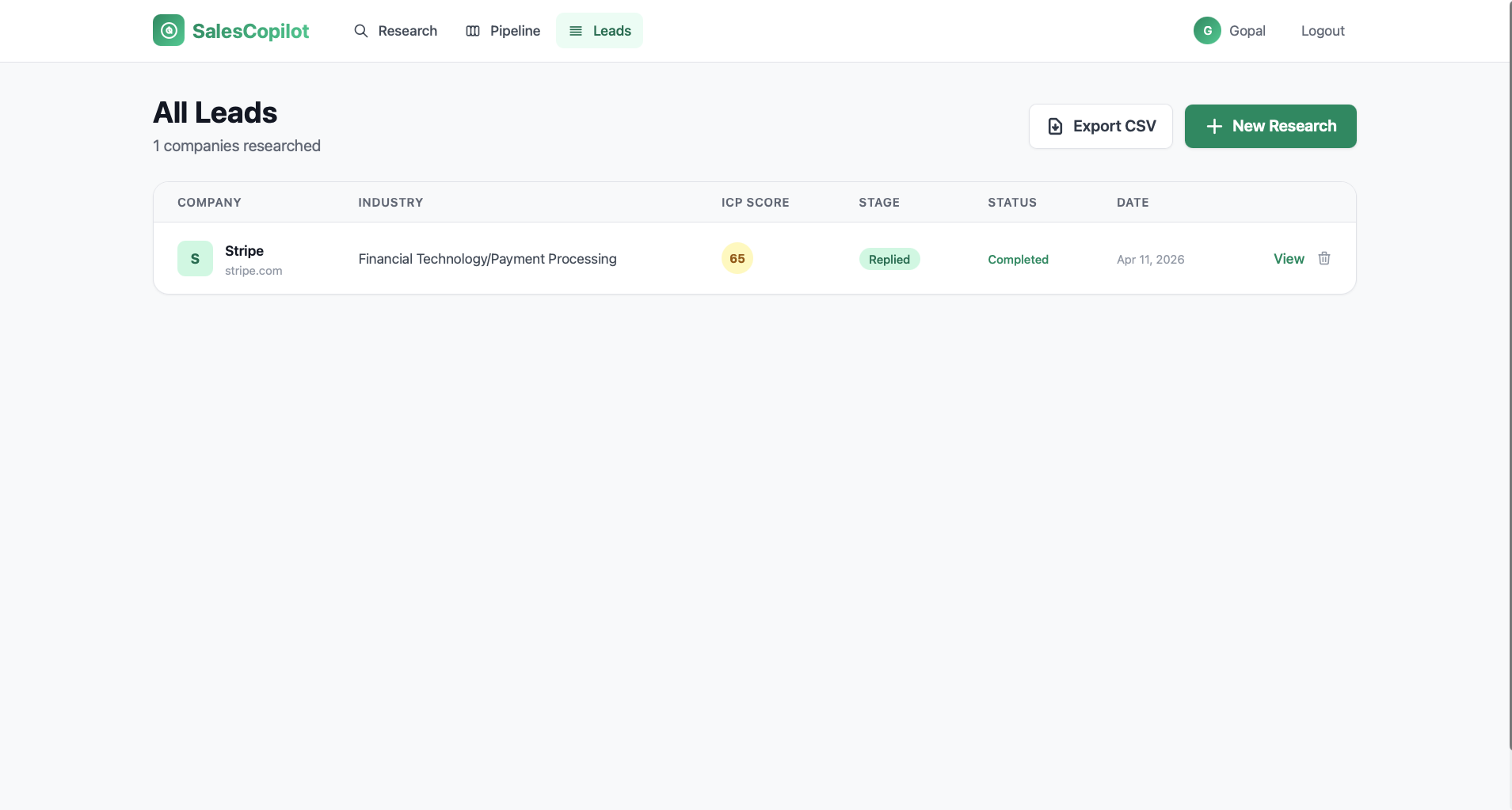

Drag-drop 6-stage kanban, bulk research by paste or CSV with live progress, one-click CSV export.

ai_service.py is not just an SDK wrapper — it's a battle-tested orchestrator with concurrency caps, retry logic, nested-JSON unwrapping, and real-time streaming.
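The source does not show how the concurrency cap, retry logic, or JSON unwrapping are built, so the following is only one plausible sketch. `call_with_retry` and `unwrap_json` are hypothetical names, the retry catches every exception for brevity (a real service would likely retry only on transient errors such as rate limits or timeouts), and "nested JSON" is read here as model output that is wrapped in a Markdown fence or double-encoded as a string.

```python
import asyncio
import json
import random

FENCE = "`" * 3  # Markdown code fence marker


async def call_with_retry(make_call, sem, retries=3, base_delay=0.5):
    """Run make_call() under a semaphore, retrying with exponential backoff."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    for attempt in range(retries):
        try:
            # The semaphore caps how many calls are in flight at once.
            async with sem:
                return await make_call()
        except Exception:
            if attempt == retries - 1:
                raise
            # Back off 1x, 2x, 4x... with jitter to avoid synchronized retries.
            delay = base_delay * (2 ** attempt) * random.uniform(1.0, 1.5)
            await asyncio.sleep(delay)


def unwrap_json(text: str):
    """Parse model output that may be fenced or double-encoded."""
    cleaned = text.strip()
    if cleaned.startswith(FENCE):
        # Drop the opening fence line (with any language tag) and the closing fence.
        cleaned = cleaned.split("\n", 1)[1] if "\n" in cleaned else ""
        cleaned = cleaned.rsplit(FENCE, 1)[0]
    value = json.loads(cleaned)
    # Unwrap JSON that was itself serialized as a JSON string.
    while isinstance(value, str):
        try:
            value = json.loads(value)
        except json.JSONDecodeError:
            break
    return value
```

A caller would create one `asyncio.Semaphore` inside the running event loop and pass it to every call, for example `await call_with_retry(lambda: score(company), sem)`, where `score` stands for any coroutine function.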

```python
# Streaming email generation via SSE
async def stream_outreach_email(
    company: dict,
    icp: dict,
    triggers: list,
    tone: str = "professional",
    user_profile: dict | None = None,
):
    seller = _build_seller_context(user_profile)
    system = f"Expert cold email copywriter.\n{seller}"

    # _sem: shared semaphore limiting concurrent Claude calls
    async with _sem:
        async with _client.messages.stream(
            model=settings.anthropic_model,
            max_tokens=4096,
            system=system,
            messages=[{
                "role": "user",
                "content": _build_email_prompt(
                    company, icp, triggers, tone,
                ),
            }],
        ) as stream:
            # Each text delta becomes one SSE message. Note that a chunk
            # containing a newline needs one "data:" line per line of text.
            async for chunk in stream.text_stream:
                yield f"data: {chunk}\n\n"
    # Tell the client the stream is finished
    yield "event: done\ndata: [END]\n\n"
```

Company website, LinkedIn, or a bare name. The system auto-detects the input type and creates a Lead row.

asyncio.create_task() fires off scraping with httpx + BeautifulSoup. The request returns immediately.

Claude converts raw HTML into a structured company profile: name, industry, size, funding, tech, logo.

Three Claude calls in parallel via asyncio.gather(). Scored, ranked, persisted.

UI polls /api/leads/{id}/status every 1.5s. Cards fade in as data arrives — live AI reveal.

Pick a tone. Claude streams subject + body directly into the editor via Server-Sent Events.

One click each for a thread-aware follow-up, a LinkedIn connection note + InMail, or a markdown meeting brief.

Drag the card new → researched → contacted → replied → meeting → closed. Stage timestamps auto-recorded.
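The first step of the workflow above, deciding whether pasted text is a website, a LinkedIn link, or a bare company name, could be approximated with a few string checks. This is a heuristic sketch, not the project's actual detector. `detect_input_type` and its three return labels are hypothetical.

```python
from urllib.parse import urlparse


def detect_input_type(raw: str) -> str:
    """Classify pasted text as 'linkedin', 'website', or 'name' (heuristic)."""
    text = raw.strip()
    # Anything empty or containing whitespace is treated as a company name.
    if not text or any(ch.isspace() for ch in text):
        return "name"
    # Add a scheme so urlparse reads "acme.com/about" as a host, not a path.
    candidate = text if "://" in text else f"https://{text}"
    try:
        host = (urlparse(candidate).hostname or "").lower()
    except ValueError:
        return "name"
    # A host without a dot ("Acme") is not a domain.
    if "." not in host:
        return "name"
    if host == "linkedin.com" or host.endswith(".linkedin.com"):
        return "linkedin"
    return "website"
```

Single-word names that contain a dot would be misread as domains, so a production version would probably confirm the guess with a DNS or HTTP check before routing.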

Every cold lead starts warmer — full intelligence, personalized outreach, meeting prep, and a LinkedIn message, all generated before the rep finishes their coffee.